Student Blog

Mining Hyperproperties using Temporal Logics

Eleonora Nesterini, 2023-09-09

Our work has been recently accepted for publication at EMSOFT 2023, one of the most relevant conferences for cutting-edge research in the design and analysis of software interacting with physical processes. In this paper, we presented the first method that automatically learns hyperproperties of real-valued signals.

Read more ...

Formal specifications play a pivotal role across the entire lifecycle of complex systems, beginning with the design phase where they establish unambiguous and rigorous requirements. These specifications continue to be indispensable in the operational phase, where they enable the automation of intricate tasks such as monitoring and anomaly detection. However, formal specifications are often unavailable or challenging to define, underscoring the significance of specification mining techniques that can automatically infer specifications from system executions. Until now, existing specification mining methods have focused on learning properties defined over single system executions, while hyperproperties, which extend properties by establishing relationships across multiple distinct executions, have been neglected due to their greater complexity. Consequently, many relevant system characteristics, such as robustness or security policies, could not be learned from data.

With our paper, we filled this gap in the literature. By combining insights from the program synthesis research field with established parameter learning methods, we also proposed the first mining approach that allows the user to regulate the amount of knowledge to embed in the process. The outcome is a surprisingly flexible method applicable in a plethora of applications from different domains.

BoundMPC: Cartesian Path Planning with Error Bounds based on Model Predictive Control in the Joint Space

Thies Oelerich, 2023-09-01

We introduce a novel online model-predictive trajectory planner for robotic manipulators known as BoundMPC. This planner enables robots to navigate reference paths smoothly while ensuring collision avoidance. It accommodates not only position and orientation adjustments but also includes waypoints along the way. The robot’s hand can follow a path closely, aiding obstacle avoidance and maintaining a safe trajectory.

Read more ...

In existing literature, the concept of decomposing path error into two components is well-established. One component tracks path progression tangentially, while the other quantifies deviations from the path in an orthogonal direction. This approach has traditionally been applied to position control. In our work, we extend this concept to orientation control, leveraging the principles of Lie theory for rotations. Additionally, we introduce a method to partition the orthogonal error plane into basis directions, simplifying the definition of asymmetric Cartesian error boundaries. Employing piecewise linear position and orientation reference paths, our approach is computationally efficient and allows real-time trajectory adjustments during the robot’s motion. This adaptability empowers our planner to excel in dynamic environments or when the robot faces new objectives.

BoundMPC has undergone testing with a robot in two distinct scenarios. In the first scenario, the robot maneuvers a large object through a confined space, involving tilting maneuvers. In the second scenario, the robot retrieves an object from a table while avoiding collisions with nearby obstacles.

Glimpse: On-Demand PoW Light Client with Constant-Size Storage for DeFi

Giulia Scaffino, 2023-06-30

In the current landscape, each blockchain operates like a separate country where people speak their unique language and don’t understand others. Our objective is to create a translator, a specialized protocol, that bridges the communication gap between these distinct blockchains. When transferring an asset from one blockchain to another or executing a smart contract involving different blockchains, such a protocol helps ensuring that data is transmitted correctly and securely between them.

Read more ...

I introduced Glimpse, a groundbreaking cross-chain communication protocol designed for PoW blockchains. What sets Glimpse apart is its ability to simultaneously achieve security, practicality, efficiency, and compatibility with a wide range of chains, all while offering enhanced expressiveness. Specifically, Glimpse ensures atomicity by only verifying and storing on the destination chain a constant amount of data relative to the source chain’s length. This results in minimal on-chain costs, making it accessible even to chains with limited scripting language capabilities.

Furthermore, Glimpse opens the door to a multitude of real-world applications within the DeFi sector. These include cross-chain lending, Proofs-of-Burn, token wrapping and unwrapping, as well as off-chain applications. Glimpse, in essence, represents a significant advancement in cross-chain communication protocols, enabling a wide range of innovative possibilities within the blockchain ecosystem.

Starting from the idea of Glimpse, we have now designed Blink, a new provably secure super-light client, which allows to read the state of a PoW blockchain efficiently, securely, and trustlessly. This makes Blink the perfect tool for applications such as wallets, which read the current state of the chain in order to let users know their account balance.

In the future, my aspirations include developing bridges for Proof-of-Stake blockchains.

Detection of Anomalies in Network Traffic

Lisa Geiginger, 2023-06-16

In our research, we propose a novel framework to manipulate botnet traffic with the intent to fool various machine learning models trained on the detection of malicious botnet traffic. Firstly, we show, that adversarial botnet samples can be crafted which evade the detection of the ML classifier while still remaining valid botnet samples. That means that no protocol rules are broken and that the botnet attack is still feasible. The validity of those samples is checked via an advanced plausibility check filter. Secondly, we show that no further knowledge about the ML-based intrusion detection system is needed in order to fool the model, i.e. no knowledge about architecture and not even Oracle access is required.

Read more ...

Botnet detection remains a challenging task due to many botnet families with different communication strategies, traffic encryption, and hiding techniques. Machine learningbased methods have been successfully applied, but have proven to be vulnerable to evasion attacks. We show how an attacker can evade the detection of botnet traffic by manipulating selected features in the attack flows. We first build two well-performing machine learning models - Random Forest and Support Vector Machine - trained using only features available in encrypted traffic. We then show with two different data sets how the detection rate (recall) decreases significantly for both models if only a few basic features are manipulated by the attacker. We apply two state-of-the-art evasion attacks: Hop Skip Jump and Fast Gradient Sign. For all manipulated attack vectors we perform a plausibility check to ensure consistency with traffic statistics and protocol rules as well as a bot check to ensure the manipulated attack vectors are still valid bot samples.

Our research demonstrates that the machine learning algorithms can easily be fooled if the attacker is able to test the black-box models numerous times. Since in a real setting, attackers may not have access to the model and training data, we investigate if the generated attack samples are transferable to other machine learning models trained with different training data. Our results show, that samples generated with Hop Skip Jump generally do not transfer well to other models while Fast Gradient Sign samples generally also evade the detection of models different from the one they were customized for.

WebSpec: Towards Machine-Checked Analysis of Browser Security Mechanisms

Lorenzo Veronese, 2023-05-22

The Web Platform is constantly evolving, and the number of Web components, i.e., browser functionalities and security mechanisms, continues to increase, driven by the update and introduction of new Web Specifications. Browsers, i.e., the implementations of such standards, are tested for compliance by means of an extensive test suite called Web Platform Tests (WPT). However, specifications are only manually reviewed by experts to identify security issues.

Read more ...

This manual process may overlook logical flaws for two main reasons. First, web specifications are usually very large and informally specified. Second, Web components are mostly evaluated in isolation without considering their composition and possible interactions. For this reason, we developed WebSpec, a framework for the formal analysis of browser security mechanisms allowing for the automated discovery of logical flaws and the development of machine-checked security proofs.

WebSpec includes a comprehensive model of the web browser in the Coq proof assistant, the formalization of 10 Web security invariants, and a toolchain to enable model checking with the Z3 theorem prover. If Z3 discovers a violation, WebSpec generates executables tests that can be validated across all major browsers. In this work, we discover two new logical flaws and verify the correctness of our proposed changes to the Web platform.

Neural Network Verification

Anagha Athavale, 2023-05-16

Neural networks have encountered tremendous success in the recent past due to their ability to infer highly nonlinear relations from data, learn accurate predictive models and make smart decisions with little or no human intervention. However, the correctness of neural networks remains a major concern. This is especially the case for safety- and security-critical applications where errors and biases can have serious undesired consequences, such as in medical diagnosis, self-driving cars and financial systems. There is an urgent need for methods that can provide guarantees about the behaviour of neural networks. A natural solution to this problem is the use of automatic verification techniques.

Read more ...

Existing methods of verification focus on a very limited property (local robustness) of neural networks. We have set out to give a better solution to this by designing a generalized method for neural network verification which gives stronger guarantees and is more expressible by using hyper-properties. Our approach will help ensure that neural networks are utilized in the best way possible eradicating the current concerns that come with them.

SMT Solving over Finite Field Arithmetic

Thomas Hader, 2023-04-24

Many zero-knowledge proof technologies for blockchains (e.g. zk-SNARK) are using finite field polynomials to encode various high-stake applications such as digital currencies. Verification of such applications is of uppermost importance as even minor errors can lead to significant (financial) loss. To perform such verification tasks, reasoning over finite field polynomials is essential.

Read more ...

In our publication SMT Solving over Finite Field Arithmetic we present one of the first automated decision procedures for formulas over finite field polynomials. We utilize well-proven SMT solving techniques to craft a novel SMT theory for finite field polynomials to be used by future verification endeavors for zero-knowledge proof applications.

Automation in Cryptographic protocols

Simon Jeanteur, 2023-04-07

Protocols are small pieces of software at the interface of systems that let components talk to each other. Within this broad class of software, we find cryptographic protocols that rely on cryptographic primitives to ensure extra properties (e.g., the secrecy of the communication, authentication of one of the participants, etc…).

Read more ...

Protocols are ubiquitous in our digitalized life, we rely on them for almost everything from authenticating to our wifi router, to exchanging data with most websites. However, they are famously error-prone in design. For instance, previous versions of the TLS protocol (used to secure communication on the web) were repeatedly broken. Hence a strong need for computer-verified proofs. And, why not, fully automated protocol verification?

Many battle-tested tools already exist to tackle this problem (most famously ProVerif and Tamarin). Most of them, however, work within a model that (mostly) underestimates the capabilities of a real malicious agent. We set out to fix this flaw by designing an automated protocol verifier sound against the real-world attacker. Limited automation in this model already exists with the tools EasyCrypt and CryptoVerif. Our tool reasons with the so-called BC-logic (from the name of its creators: Gergei Bana and Hubert Comon-Lundh), which gives us a more automation-friendly approach than previously existing solutions. This lets us do symbolic proofs and rely on existing efficient first-order theorem provers to derive computational results.

Disentangling Decentralized Finance (DeFi) Compositions

Stefan Kitzler, 2023-03-30

Decentralized Finance, or DeFi, refers to a set of financial services and applications that operate on blockchain-based networks, primarily utilizing smart contracts. The innovative character of DeFi lies in the ability to reuse existing code, enabling the construction of services atop one another and, consequently, facilitating the creation of complex compositions of DeFi protocols in a single transaction.

Read more ...

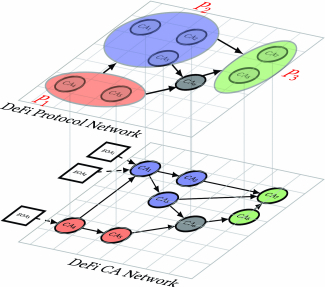

Ethereum is currently the most important distributed ledger technology (blockchain) for DeFi services. It differs from the Bitcoin blockchain conceptually as it implements the so-called “account model” with two different account types. An externally owned account (EOA) is a “regular” account controlled by a private key held by some user. A code account (CA), which is synonymous with the notion “smart contract,” is an account controlled by a computer program, which is invoked by issuing a transaction with the code account as the recipient. In our analysis, we want to understand and discover relations between DeFi protocols and associated CAs. For that purpose, as shown in Figure, we constructed networks consisting of DeFi traces on two abstraction levels: the lower-level DeFi Code Account (CA) network and the higher-level DeFi Protocol network.

Schematic illustration of constructed networks

Our research began by gathering data from 23 DeFi protocols and their 10,663,881 associated Ethereum accounts to analyze their interactions based on transactions data from Ethereum. From a network perspective, we observe a high degree and centrality of decentralized exchanges and lending protocols, with interactions primarily occurring within a strongly connected component between them. Notably, traditional community detection methods proved insufficient for disentangling these interactions.

To address this challenge, we propose an innovative algorithm designed to decompose the building blocks of DeFi protocols within single transactions. Our findings reveal that these building blocks can be nested, illustrating how components of DeFi protocols interconnect in complex structures across multiple protocols.

To demonstrate the practicality of our approach, we present a case study inspired by the recent collapse of the UST stablecoin in the Terra ecosystem. Under the hypothetical assumption of a similar fate for the USD Tether stablecoin, we investigate which DeFi protocols would be affected and examine their dependencies on USD Tether. In summary, our results and methodologies contribute to a deeper understanding of this emerging family of financial products.

Unmasking APTs: An Automated Approach for Real-World Threat Attribution

Aakanksha Saha, 2023-03-23

Attributing a malicious sample to a responsible entity is demanding, time-consuming, and often erroneous. Our research explores a novel attribution approach tailored to perform campaign-level and group-level attribution. The two-tiered strategy effectively addresses the persistent issues of inconsistency in APT nomenclature in the cybersecurity community and streamlines the threat analysis efforts in identifying APT groups and campaigns.

Read more ...

By automating feature extraction for heterogenous file types, providing reliable datasets and employing state-of-the-art clustering techniques, our work serves as a source of stimulation for future research in this critical field. Attribution of Diverse APT empowers security practitioners with automated tools to enhance attribution analysis and evaluate APT samples’ origins, advancing the cybersecurity practices.

ALASCA: Reasoning in Quantified Linear Arithmetic

Johannes Schoisswohl, 2023-01-30

Automated theorem proving is the task of showing that a formula follows from a set of assumptions. Formulas can be given in different input languages, which we call logics. Different logics allow to talk about different properties, depending on which “languages features” these logics have. Two important features are quantifiers (∀, ∃), which allow expressing properties like “for all x the property φ”, or “there is an x such that φ holds”, and interpreted arithmetic operations which allow us to talk about numbers.

Read more ...

Combining these two language features is notoriously difficult, and impossible to achieve in a complete way. In our paper we introduce an algorithm that combines these two features that gives strict guarantees about what we can prove and that out-competes all the state of the art algorithms in this area.

The calculus we introduce can deal with very general problems that contain arithmetic, quantifiers and uninterpreted functions, which means it can be used for a large number of problem domains, like verifying the correctness of soft and hardware systems, or proving security thereof.

Satisfiability Modulo Custom Theories in Z3

Clemens Eisenhofer, 2023-01-15

Satisfiability Modulo Theories (SMT) solvers are programs that can decide whether there are values for variables such that a given input formula evaluates to true. In simple terms, SMT solvers help us solve “puzzles” involving logical and mathematical constraints by determining if there is a way to make everything fit together. In security analysis, SMT solver are often used as parts of “detective tool” that helps uncover vulnerabilities and weaknesses in computer systems or software. It can analyze the system’s rules and constraints to find out if there are any combinations of actions that can bypass or break through the security measures. For instance, an SMT solver can help determine if there are inputs or actions that would allow someone to log in without the correct password or access sensitive data they shouldn’t have access to. It does this by exploring various scenarios and trying to find ways to make the system’s rules contradictory or inconsistent, which would indicate a security flaw.

Read more ...

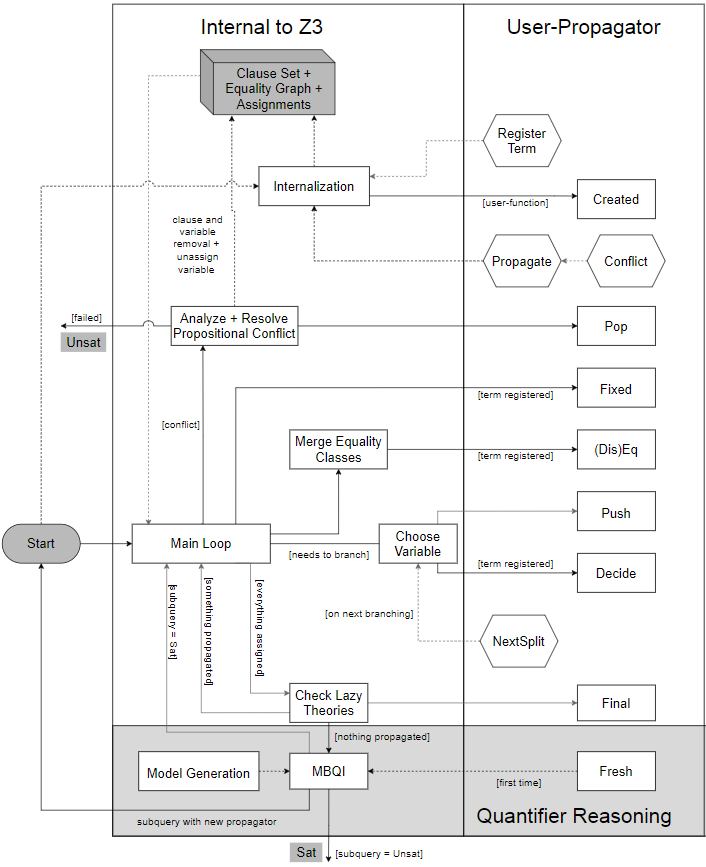

In contrast to SAT solvers, the constraints in SMT do not necessarily include only Booleans (true or false), but possibly also “theory variables and theory constraints”. These theories may be, for example, integers or real (e.g. Find some “x” and “y” out of the positive integers such that x + 2y < y * y ∧ x / y > y) but can be also more exotic ones like partial orders or sequences. The high efficiency of SMT solvers relies on sophisticated decision procedures for the supported theories and their operators (such as +, *, <). Until now, if no decision procedure is built in, users either had to use quantifiers to model the theories themselves, which usually results in very poor performance and non-termination, or they had to modify the solver’s code base, which is quite cumbersome and requires deep knowledge of the solver’s internals.

We introduced the so-called “user-propagation” framework in Z3, one of the leading SMT solvers. It allows its users to implement their own domainspecific decision procedures for new theories, as well as heuristics and lazy formula encodings, directly in Z3 without having to recompile the solver or deal with implementation details of Z3. This can be achieved by providing a handful of (optional) event handling functions (see Figure) that are called by Z3 during reasoning as soon as a potentially relevant event occurs. These functions can return additional information and hints to the solver in these cases (e.g. conflicts or branching suggestions). We have shown that we can easily outperform “Vanilla Z3” in terms of memory usage and reasoning time by providing only a few (self-programmed) problem-specific functions. This way, we can solve even more problems via SMT reasoning that could not be solved before.

Event handling functions

Solving Invariant Generation for Unsolvable Loops

Marcel Moosbrugger, 2022-12-15

Probabilistic programs are useful to model and better understand many complex models from vastly different domains, such as biology, robotics, or economics. For instance, autonomous vehicles are always subject to uncertain events like winds or erroneous sensors and can be modeled as probabilistic programs. The inherent randomness in these models makes their automated analysis difficult. Nevertheless, recent works established a technique to compute the exact moments (e.g. expected value and variance) of program variables in probabilistic loops. In the case of autonomous vehicles, this corresponds to the expected trajectories and the average deviation thereof. However, these techniques only work if the arithmetic used in the given programs is simple, which means polynomial. Unfortunately, many real-world phenomena exhibit dynamics beyond what can be modeled with plain polynomial arithmetic.

Read more ...

In our recent paper published at QEST 2022, we were able to lift this restriction and provide a solution to compute moments of probabilistic loops that were considerably beyond the state-of-the-art. Our approach combines recently established techniques from program analysis with the mathematical method of polynomial chaos expansion. Many real-world systems, such as autonomous vehicles, can only be modeled using general non-polynomial functions. Our paper opens the door for the automatic and exact analysis of such systems to make them safer.

Holo-Block Chain: A Hybrid Approach for Secured IoT Healthcare Ecosystem

Asad Aftab, 2022-11-15

The Internet-of-Things (IoT) is a tangible and imminent technology that enables the connectivity of smart physical devices with virtual objects across different platforms through the internet. This technology is currently undergoing significant experimentation to enable distributed operation, making it particularly suitable for integration into the healthcare ecosystem. However, within the IoT healthcare ecosystem (IoT-HS), the nodes of IoT networks are exposed to heightened security threats due to the handling of sensitive and personal data. Regulating an adequate volume of sensitive and personal data, IoT-HS faces various security challenges, necessitating a distributed mechanism to address these concerns. Blockchain, with its distributed ledger, is integral to solving security concerns in IoT-HS but presents issues such as extensive storage and computational requirements. In contrast, Holochain, with low computational and memory demands, lacks authentication distribution availability.

Read more ...

Our paper proposes a hybrid Holochain and Blockchain-based privacy perseverance and security framework for IoT-HSs that combines the benefits of both technologies, overcoming computational, memory, and authentication challenges. This framework is particularly well-suited for IoT scenarios where resource optimization is essential. A comprehensive security and performance analysis is conducted to demonstrate the suitability and effectiveness of this hybrid security approach for IoT-HSs compared to Blockchairi-only or Holochairi-only based approaches.

The framework introduced in this work breaks new ground by combining two prominent technologies, Holochain and Blockchain, in an innovative way, offering significant improvements over conventional approaches to IoT security. Holochain helps reduce storage capacity requirements, while Blockchain ensures data integrity and security during data transfer among legitimate users. This framework enhances data security and efficiency, holding significant relevance across various IoT applications, from the industrial sector to healthcare. In the healthcare sector, it effectively addresses critical challenges related to patient data confidentiality, integrity, and real-time data processing. These contributions improve the overall reliability and trustworthiness of IoT networks, making them more suitable for critical healthcare applications.

This Is the Moment for Probabilistic Loops

Marcel Moosbrugger, 2022-10-31

Classical computer programs are usually deterministic and nothing is left to chance. However, many real-world systems are riddled with uncertainty. For instance, a sensor of a robot can fail with some probability or a self-driving car can experience some unpredictable wind. Probabilistic programs incorporate uncertainty directly into the program code and allow us to model such systems. Despite the inherent uncertainty of these models, it is imperative to know that they function correctly. For instance, one would like to know that the expected trajectory of an autonomous vehicle avoids a forbidden area.

Read more ...

In our paper, we developed a new exact algorithmic technique to compute the expected values of variables in probabilistic loops. Loops are the most challenging aspects of the automated analysis of programs. Our technique is based on the theory of recurrences and is able to analyze stochastic systems from many different areas.

Moment-Based Invariants for Probabilistic Loops with Non-polynomial Assignments

Andrey Kofnov, 2022-09-16

Many real-world dynamic systems often involve randomness and can be likened to computer programs. These programs contain intricate mathematics that is not straightforward to handle. We’ve devised intelligent techniques to gain a better understanding of how these complex, evolving systems function. To do this, we’ve created two methods that allow us to extract vital insights without the need for extensive simulations. One method is highly precise and works exceptionally well for programs that involve trigonometry and exponential mathematics. The other method, while somewhat approximate, is versatile and applicable to a wide range of commonly used mathematical models. We employ a mathematical approach to simplify the study of these programs.

Read more ...

We’ve rigorously tested our methods in various scenarios, such as analyzing financial decisions and studying the movement of objects in unpredictable environments. Our findings consistently demonstrate the high accuracy and reliability of our predictions. This approach stands out as superior to current practices in the field.